Natural Language Processing Meets FDA: My AI Adventure in the Post-Marketing Space

Yong Ma (FDA)

Disclaimer

This article reflects the views of the author and should not be construed to represent FDA’s views or policies.

Highlights:

Below is a summary of some of FDA’s examples of using natural language processing (NLP) in the post-marketing space over the past few years.

FDA Adverse Event Reporting System (FAERS) Enhancement: Evolving from simple rules to advanced language models, NLP applications have delivered measurable improvements in pharmacovigilance using FAERS, ranging from reducing missing demographic information (e.g., age, gender) to conceptual piloting of de-duplication of adverse event reports.

Electronic Health Record (EHR) Processing: NLP technologies effectively address a fundamental challenge in pharmacoepidemiology by extracting meaningful data from unstructured physician notes. This capability was demonstrated through successful implementations in anaphylaxis identification and in the extraction of outcome and potential confounder information in the Multi-source Observational Safety study for Advanced Information Classification using NLP (MOSAIC-NLP) project.

Social Media Surveillance for Public Health: During public health emergencies, NLP models may analyze social media narratives to identify disease trends and symptoms in real time. FDA’s intramural research demonstrated that NLP models such as Bidirectional Encoder Representations from Transformers (BERT) can successfully extract COVID infections and symptoms from Reddit posts.

When ChatGPT suddenly became the talk of every conference, coffee break, and dinner party in recent years, I found my FDA colleagues were also chatting: what is the FDA doing with this new technology at work? Is it going to be a useful tool? Or is it going to replace us?

Reassuringly, my experience with AI in the post-marketing surveillance landscape confirmed that we’ve been experimenting with NLP for the past few years, before it became the must-have technology that everyone claims they’re “leveraging.” While the world was just discovering ChatGPT’s charm, we had already been extracting key information from text, using everything from something as simple as a rule-based algorithm to much more sophisticated language models.

At the FDA’s Center for Drug Evaluation and Research (CDER), statisticians support post-marketing drug safety surveillance and work closely with our colleagues in the divisions of Pharmacovigilance and divisions of Pharmacoepidemiology at the Office of Surveillance and Epidemiology. Over the years, our roles went beyond those of traditional statisticians as we stepped into the wonderful world of natural language processing.

In this article, I’ll take you behind the regulatory curtain to share our encounters with AI, revealing the surprising fact that NLP has been our workplace companion all along. These projects span from 2018 to the present and are organized by research area: pharmacovigilance (projects 1-2), pharmacoepidemiology (projects 3-4), and public health emergency (project 5).

1. Capturing key missing demographic information from the fixed field in FAERS report using case narratives

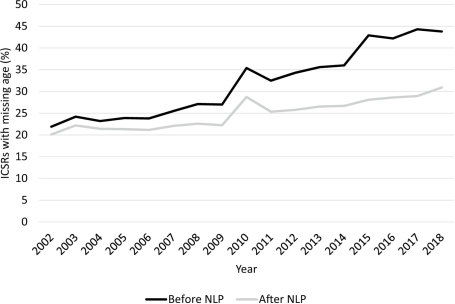

My first encounter with language processing was back in 2018, when our team was asked to help evaluate an algorithm developed to capture missing age data in the FDA Adverse Event Reporting System (FAERS). FAERS, a spontaneous reporting system capturing adverse events associated with drug use and medication errors, is the cornerstone of pharmacovigilance. Demographic information, such as age, sex, and race/ethnicity, is usually captured in the fixed fields. However, many adverse event reports were missing patient age in their structured data fields, and the problem was worsening over time: the percentage of reports with missing age data doubled from about 22% in 2002 to nearly 44% in 2018. This created real issues, especially when trying to monitor pediatric safety, where age is critical.

My epidemiologist colleagues tackled this with a simple NLP approach. A rule-based algorithm (Wunnava S, 2017) was built to search for numbers followed by key words like “years” or “years old”, “months” etc. and converted those to years. The tool would extract the first age it found in each report’s narratives. This straightforward approach didn’t need any training data and could work across different FAERS runs.
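To make the idea concrete, here is a minimal sketch of what a rule of this kind might look like. It is not the published algorithm: the regular expression, the function name `extract_first_age_years`, and the restriction to years and months are all assumptions made for illustration.

```python
import re
from typing import Optional

# Hypothetical pattern: a number followed by "year(s)" or "month(s)".
# The real tool's keyword list and rules are not reproduced here.
AGE_PATTERN = re.compile(r"(\d+(?:\.\d+)?)[\s-]*(years?|months?)\b", re.IGNORECASE)

# How many of each unit make up one year.
UNITS_PER_YEAR = {"year": 1, "month": 12}


def extract_first_age_years(narrative: str) -> Optional[float]:
    """Return the first age mentioned in the narrative, converted to years."""
    match = AGE_PATTERN.search(narrative)
    if match is None:
        return None  # no age-like phrase in the text
    value = float(match.group(1))
    unit = match.group(2).lower().rstrip("s")  # "years" -> "year"
    return value / UNITS_PER_YEAR[unit]


print(extract_first_age_years("A 45-year-old male developed a rash."))
print(extract_first_age_years("The infant, 18 months old, was hospitalized."))
print(extract_first_age_years("Patient experienced dizziness."))
```

A rule this naive would also pick up durations such as "for 3 months", which is one reason a validation study against manually reviewed reports matters.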

Although we were not involved with the development of the algorithm, we were asked to help develop a validation study to evaluate the performance of the algorithm. We worked with our pharmacovigilance colleagues and designed a study to test this tool on 1,500 randomly selected reports (Pham P, 2021). The algorithm correctly identified 98.5% of ages that were present in the narratives. It also avoided false alarms 92.9% of the time, meaning it rarely claimed to find an age when there wasn’t one. When it identified an age, it was right 94.9% of the time.
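The three measures above are sensitivity, specificity, and positive predictive value. The sketch below shows how they are computed from a two-by-two validation table; the counts are made up purely for illustration and are not the counts from the study.

```python
from typing import Dict


def diagnostic_metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Compute validation metrics from true/false positive/negative counts."""
    return {
        # Of the narratives that contain an age, how many did the tool find?
        "sensitivity": tp / (tp + fn),
        # Of the narratives without an age, how many did the tool leave alone?
        "specificity": tn / (tn + fp),
        # Of the ages the tool reported, how many were correct?
        "ppv": tp / (tp + fp),
    }


# Hypothetical counts, NOT the study data.
metrics = diagnostic_metrics(tp=90, fp=20, tn=80, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```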

When we applied this tool to the entire FAERS database covering 2002 to 2018, we extracted age information for an additional one million reports. This brought the overall percentage of reports missing age data down from 37% to 27% (Figure 1). The impact was especially notable for pediatric cases, where the tool more than doubled the number of reports with known ages for children under 6 years old.

Figure 1. Percentage of FAERS ICSRs with missing age before and after NLP implementation. FAERS FDA Adverse Event Reporting System, ICSR individual case safety report, NLP natural language processing (Reprinted from (Pham P, 2021) under CC BY‑NC 4.0)

We noted that although supplementing the structured age data with information from the unstructured narrative brought missingness down from 37% to 27%, 27% is still high. This is largely because age is simply not entered anywhere in many FAERS reports. In such cases NLP has reached its limit, and efforts should be directed toward better data entry: when the information is not there, NLP cannot help. We noticed the same phenomenon when we tried to capture other demographic data, specifically gender, weight, race, and ethnicity, from the unstructured field (Dang V, 2022). A rule-based NLP mini-algorithm was developed and tailored to each demographic variable. The gender algorithm, for instance, looked for terms like “male,” “female,” “his,” and “her,” while the weight algorithm hunted for numbers followed by units like “lb” or “kg.” The gender extraction tool performed well, with 98.6% sensitivity, and helped reduce missing gender data in FAERS by 33%, with over 470,000 reports found to contain usable gender data hidden in the text. Unfortunately, the weight, race, and ethnicity algorithms showed high specificity but low sensitivity, not because the tool underperformed, but because the information just wasn’t there. It turns out, you can’t extract what doesn’t exist.

2. An Evaluation of Duplicate Adverse Event Reports Characteristics in the Food and Drug Administration Adverse Event Reporting System

Our journey with pharmacovigilance continued. In 2023, we were asked to help with one of the long-standing challenges in post-market drug surveillance: determining when multiple adverse event reports in FAERS describe the same event. It’s not uncommon for different reporters (patients, physicians, manufacturers) to submit slightly different narratives for what may be the same case. These duplicates can inflate counts, distort safety signals, and make safety signal detection more challenging. Our pharmacovigilance colleagues provided reports that had already been manually identified as duplicates, and our task was to see whether narrative-level similarity among the duplicate reports was indeed distinguishable from that among non-duplicate reports (Janiczak S, 2025).

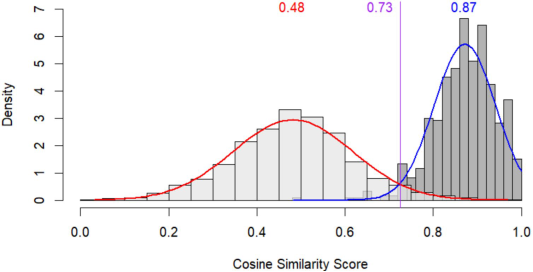

To tackle this, we deployed Sentence-BERT (SBERT)—a model designed to convert sentences into embeddings that capture semantic meaning. Using the all-MPNet-base-v2 variant, we transformed each report narrative into a vector and then measured cosine similarity between pairs of narratives. If the narratives were telling the same story (e.g., “the patient developed a rash and shortness of breath” vs. “rash and trouble breathing began after dose”), they’d show up as close in this vector space and have a cosine similarity close to 1. We found that confirmed duplicate reports had a median cosine similarity of 0.87, while random non-duplicate pairs had a median of just 0.48. As shown in Figure 2, with a threshold of 0.73 as a classifier, we could achieve a sensitivity of 96% and specificity of 96%.
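The thresholding step can be sketched in a few lines. This is only an illustration: the three-dimensional vectors stand in for real SBERT embeddings (which have hundreds of dimensions), the function names are hypothetical, and treating a similarity exactly equal to the threshold as a duplicate is an assumption.

```python
import math
from typing import Sequence

DUPLICATE_THRESHOLD = 0.73  # threshold reported in the text


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def is_likely_duplicate(a: Sequence[float], b: Sequence[float],
                        threshold: float = DUPLICATE_THRESHOLD) -> bool:
    """Flag a pair of narratives for review when their embeddings are close."""
    return cosine_similarity(a, b) >= threshold


# Toy vectors standing in for narrative embeddings (hypothetical values).
report_a = [0.9, 0.1, 0.4]
report_b = [0.8, 0.2, 0.5]  # points in nearly the same direction as report_a
report_c = [0.0, 1.0, 0.0]  # points in a very different direction

print(is_likely_duplicate(report_a, report_b))
print(is_likely_duplicate(report_a, report_c))
```

In practice the vectors would come from the embedding model, and a flagged pair would go to a reviewer, not be removed automatically.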

The cosine similarity measure appears to be a promising tool facilitating duplicate identification; however, certain practical considerations remain. Computing all possible pairwise similarities across the massive FAERS database would require large computing power and time and may not be feasible; model and threshold need to be carefully chosen. Plus, while narrative similarity is a powerful flag, it doesn’t replace expert review or structured field analysis. Instead, this method may serve as a decision support tool: a fast, consistent way to surface likely duplicates that can then be reviewed more carefully.
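A quick back-of-the-envelope calculation shows why the all-pairs comparison is a concern: the number of unordered pairs among n reports is n(n-1)/2, which grows quadratically. The report counts below are arbitrary round numbers chosen for illustration, not the size of FAERS.

```python
def count_pairs(n_reports: int) -> int:
    """Number of unordered report pairs: n * (n - 1) / 2."""
    return n_reports * (n_reports - 1) // 2


# Arbitrary illustrative sizes, not actual FAERS counts.
for n in (1_000, 100_000, 1_000_000):
    print(f"{n:>9,} reports -> {count_pairs(n):>15,} pairs")
```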

Figure 2. Distribution of cosine similarity analysis of narrative text. (Reprinted from (Janiczak S, 2025), licensed under CC BY 4.0)

3. Improving Methods of Identifying Anaphylaxis for Medical Product Safety Surveillance Using Natural Language Processing and Machine Learning

If capturing missing data and de-duplicating reports from FAERS narratives are relatively simple applications of NLP, this third project took a big step forward. This study (Carrell DS, 2023) addressed the critical challenge of accurately identifying anaphylaxis events in electronic health records for FDA medical product safety surveillance. Anaphylaxis is a rare but severe, potentially life-threatening allergic reaction with rapid onset. It is often caused by medications, food, or other exposures. Lifetime anaphylaxis prevalence estimates in the US range from 0.05% to 2%, and incidence is increasing. Anaphylaxis mortality rates are increasing for medication-induced cases. The FDA’s Sentinel Initiative monitors medical product safety using real-world data through the Active Risk Identification and Analysis (ARIA) System. However, ARIA has been insufficient for identifying anaphylaxis because of the condition’s complex clinical presentation and the system’s reliance on structured medical claims data. Existing automated algorithms, including the 2013 Walsh algorithm, achieved only 63% positive predictive value when identifying anaphylaxis events. This falls short of the commonly used ≥80% threshold for FDA ARIA analyses. The identification challenge stems from several factors: anaphylaxis has diverse clinical presentations, “rule-out” coding practices are frequent, and diagnosis codes show high sensitivity but low specificity. These issues create a major barrier to effective disease surveillance and prevent clinicians from identifying actionable health risks.

To overcome these limitations, the study team developed machine learning algorithms incorporating NLP. The goal was to better discriminate between actual and potential anaphylaxis events using rich electronic health record text data. The NLP methodology included creating a custom dictionary of anaphylaxis-related concepts through clinical expert review. The study team also augmented this with Unified Medical Language System (UMLS) concepts from published literature. The dictionary was enriched with synonyms and misspellings discovered through manual chart review. A locally developed NLP system, like Apache cTAKES, identified dictionary terms in clinical notes. It used a tailored ConText algorithm to distinguish affirmative mentions from negated, historical, or hypothetical references. The team manually engineered 468 candidate NLP-derived covariates. These included rules for multi-organ system involvement, symptom categories, normalized mention counts, and treatment indicators. Ultimately 100 covariates were selected through expert judgment and frequency analysis and added to a prediction model already containing structured data.
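The distinction between affirmed and negated, historical, or hypothetical mentions can be illustrated with a toy example. The sketch below is a heavily simplified, ConText-inspired rule and is not the study's system, cTAKES, or the actual ConText algorithm; the dictionary terms, trigger phrases, terminator words, and window size are all hypothetical.

```python
import re
from typing import List, Tuple

# Hypothetical mini-dictionary and trigger lists, for illustration only.
DICTIONARY = {"anaphylaxis", "hives", "wheezing"}
TRIGGERS = {
    "negated": ["no", "denies", "without"],
    "historical": ["history of"],
    "hypothetical": ["if", "risk of"],
}
TERMINATORS = {"but", "however"}  # words that end a trigger's scope
WINDOW = 5  # number of preceding tokens to inspect


def classify_mentions(sentence: str) -> List[Tuple[str, str]]:
    """Label each dictionary term as affirmed, negated, historical, or hypothetical."""
    tokens = re.findall(r"[a-z]+", sentence.lower())
    results = []
    for i, token in enumerate(tokens):
        if token not in DICTIONARY:
            continue
        scope = tokens[max(0, i - WINDOW):i]
        # Drop everything up to and including the last terminator word.
        for j in range(len(scope) - 1, -1, -1):
            if scope[j] in TERMINATORS:
                scope = scope[j + 1:]
                break
        preceding = " ".join(scope)
        status = "affirmed"
        for label, phrases in TRIGGERS.items():
            if any(re.search(rf"\b{re.escape(p)}\b", preceding) for p in phrases):
                status = label
                break
        results.append((token, status))
    return results


print(classify_mentions("Patient denies wheezing but has hives."))
print(classify_mentions("History of anaphylaxis."))
```

Counts of affirmed mentions per patient are the kind of quantity that could then feed NLP-derived covariates such as those described above.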

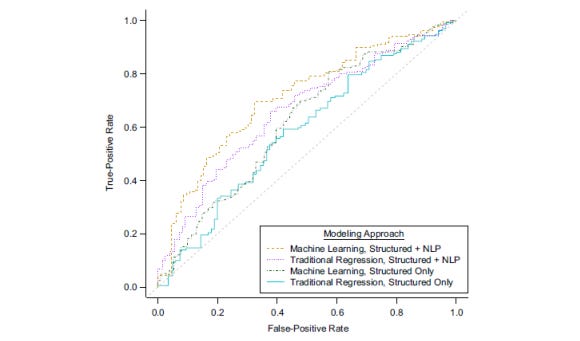

The NLP-enhanced models significantly outperformed structured data-only approaches. The best performing model achieved a cross-validated area under the curve (AUC) of 0.71 compared to 0.62 for structured data alone (Figure 3). At a classification threshold yielding 66% cross-validated sensitivity, the model achieved 79% cross-validated positive predictive value. This represents a substantial improvement over existing methods.

Figure 3. Weighted cross-validated area under the receiver operating characteristic curve for Kaiser Permanente Washington algorithms identifying actual anaphylaxis events in Kaiser Permanente Washington data (2015–2019) using the best machine-learning approach applied to structured and all natural language processing (NLP) data, traditional logistic regression approach applied to structured and all NLP data, machine-learning approach applied to structured data only, and traditional logistic regression approach applied to structured data only. (Figure reproduced from: (Carrell DS, 2023) Reused under the terms of the Creative Commons Attribution License.)

4. Natural Language Processing in Pharmacoepidemiology: Lessons from the Multi-Source Observational Safety study for Advanced Information Classification Using NLP (MOSAIC-NLP)

FDA’s Sentinel Initiative integrates innovation into drug safety monitoring, and the Multi-Source Observational Safety study for Advanced Information Classification Using NLP (MOSAIC-NLP) project applied NLP in pharmacoepidemiology (Jaffe, 2024). When using real-world data (RWD) from electronic health records (EHRs), important information on confounders and outcomes is contained in clinical notes. The MOSAIC-NLP study demonstrated the feasibility of applying NLP to a data set including 17+ million notes from over 100 healthcare systems to extract key information on outcomes and potential confounders. In this retrospective cohort study, the study team examined EHR-claims linked structured and unstructured data (2015-2022) from multiple national sources. Patients with asthma who newly initiated montelukast (monotherapy) were compared with those who initiated inhaled corticosteroids with respect to neuropsychiatric events.

The study found that including structured and unstructured EHR data significantly increased the number of detected suicidality and self-harm events related to both medications, both at baseline and during follow-up. Other baseline covariate information, such as GERD, cough, COPD, and substance abuse, was also captured more completely. The broadened scope and scale of clinical information extracted from the structured and unstructured EHR data enriched the measurement of patient and disease characteristics and enhanced the strength and accuracy of risk estimates, compared with estimates from claims data alone. Although the findings on the association between montelukast use and neuropsychiatric events did not differ from prior studies, integrating relevant entities extracted from clinical text using NLP added extra evidence and strength to the study conclusion.

5. Identifying COVID-19 cases and extracting patient reported symptoms from Reddit

In 2021, as the COVID-19 pandemic continued to unfold, traditional surveillance systems struggled to keep pace with real-time symptom reporting, especially from underrepresented or non-clinical populations. Meanwhile, millions of people were openly sharing their symptoms, frustrations, and theories on social media platforms like Reddit. Epidemiologists at the FDA saw an opportunity: could social media be used to provide meaningful health data—specifically, COVID-19 case identification and patient-reported symptoms? And we statisticians quickly pitched in by approaching this with automation so that we were not limited to the cumbersome manual process. The goal was to develop a fully automated, scalable method to detect self-reported COVID-19 cases and extract symptoms with clinical relevance (Guo M, 2023).

To accomplish this, we built a two-stage NLP pipeline. First, we tackled case identification using a BERT-Large model applied to comments from users of a “COVID” subreddit, which were aggregated by author into “author documents.” These were split into 512-token segments (due to BERT’s limit), then encoded and passed through a neural network classifier that aggregated the chunk-level outputs. The model achieved 91.2% accuracy in identifying COVID-positive cases, demonstrating robust performance despite the presence of colloquial language, sarcastic expressions, and the prevalence of unsubstantiated claims regarding the pandemic.
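The chunk-then-aggregate pattern can be sketched as follows. This is an illustration under stated assumptions, not the study's pipeline: the tokens are placeholders, the per-segment scores are invented, and a simple mean with a 0.5 cutoff stands in for the neural network that aggregated chunk-level outputs in the actual work.

```python
from typing import List, Sequence

MAX_TOKENS = 512  # segment length mentioned in the text


def split_into_segments(tokens: Sequence[str],
                        max_tokens: int = MAX_TOKENS) -> List[List[str]]:
    """Cut one author document into consecutive segments of at most max_tokens."""
    return [list(tokens[i:i + max_tokens]) for i in range(0, len(tokens), max_tokens)]


def aggregate_segment_scores(scores: Sequence[float], cutoff: float = 0.5) -> bool:
    """Stand-in aggregation: average the segment scores and apply a cutoff."""
    if not scores:
        raise ValueError("at least one segment score is required")
    return sum(scores) / len(scores) >= cutoff


# Placeholder author document of 1,100 tokens.
author_document = ["tok"] * 1100
segments = split_into_segments(author_document)
print([len(s) for s in segments])

# Invented per-segment probabilities of "COVID-positive".
print(aggregate_segment_scores([0.9, 0.8, 0.4]))
```

With a real BERT tokenizer, the special start and separator tokens also count toward the 512-token limit, so the usable content per segment is slightly smaller.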

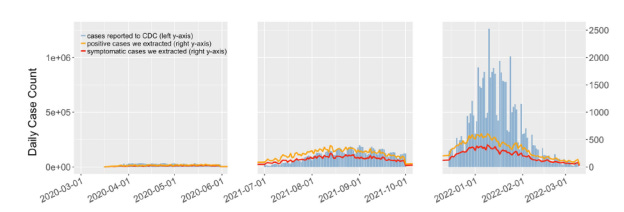

Once positive cases were flagged, the next challenge was to extract symptoms from unstructured, often creatively phrased narratives. For this, we introduced QuadArm, a four-step NLP framework. It began with a BERT/BioBERT-based question-answering model to identify rough symptom mentions. These were expanded using word embeddings (GoogleNews word2vec) to capture related keywords and modifiers—so the model could learn that “burning lungs” and “tight chest” might live in the same semantic neighborhood. The refined symptom phrases were then clustered using Adaptive Rotation Clustering (ARC), which dynamically groups similar terms without needing to predefine the number of clusters. Finally, the clusters were mapped to standardized UMLS concepts, translating Reddit slang into medically meaningful terms. In the end, this NLP approach revealed evolving symptom trends across the pandemic’s early, Delta, and Omicron waves—showing, for example, a drop in loss of smell and a rise in sore throat, consistent with CDC reports. The study demonstrates that with the right combination of transformer models, semantic feature expansion, and optimized clustering methodologies, social media discourse can be systematically analyzed to extract clinically relevant information.

Figure 4. Daily trends in the number of COVID-19 cases reported to the CDC and the number we extracted, for the corresponding three periods. (Reused from (Guo M, 2023), under CC BY 4.0)

Reflecting on the projects I’ve worked on, I have found NLP to be a powerful tool that can be applied in pharmacovigilance, pharmacoepidemiology, and public health emergencies. Although the text narratives come from different sources (spontaneous reports for pharmacovigilance, doctors’ notes for pharmacoepidemiology, and social media posts for public health information), all require efficient automated text processing to extract key information accurately. Language modeling tools demonstrate significant potential for these applications, and I anticipate expanded utilization of evolving natural language processing technologies, with continued algorithmic improvements contributing to enhanced public health outcomes.

References:

Carrell DS, G. S.-H. (2023). Improving Methods of Identifying Anaphylaxis for Medical Product Safety Surveillance Using Natural Language Processing and Machine Learning. Am J Epidemiol., 192(2), 283-295.

Dang V, W. E. (2022). Evaluation of a natural language processing tool for extracting gender, weight, ethnicity, and race in the US food and drug administration adverse event reporting system. Front. Drug Saf., 2 - 2022.

Guo M, M. Y. (2023). Identifying COVID-19 cases and extracting patient reported symptoms from Reddit using natural language processing. Sci Rep., 13(1).

Jaffe, D. (2024). Retrieved from https://www.sentinelinitiative.org/news-events/publications-presentations/natural-language-processing-pharmacoepidemiology-lessons

Janiczak S, T. S. (2025). An Evaluation of Duplicate Adverse Event Reports Characteristics in the Food and Drug Administration Adverse Event Reporting System. Drug Saf.

Pham P, C. C. (2021). Leveraging Case Narratives to Enhance Patient Age Ascertainment from Adverse Event Reports. Pharmaceut Med, 35(5), 307-316.

Wunnava S, Q. X. (2017). Towards transforming FDA adverse event narratives into actionable structured data for improved pharmacovigilance. 2017 Proceedings of the symposium on applied computing, (pp. 777–82).
